[1] 0.655[1] 0.25Production, i.e. firms

Consumption, i.e. product variety

Labour pool, i.e. skills in labour market

In general is a good thing for:

urban economies

productivity

urban and industrial agglomeration

Within-sector or Marshall–Arrow–Romer (MAR) spillovers

Between-sector or Jacobs spillovers

Large empirical literature trying to identify the optimal ratio, e.g. Saviotti and Frenken (2008) and Caragliu, Dominicis, and Groot (2016)

MAR externalities (or spillovers): good for productivity and short-term growth

Jacobean externalities: good for innovation and long-term growth

Using more clear economics terminology (Fujita et al. 1989):

Diverse cities (heterogeneous agglomerations) enjoy economies of scope

Homogeneous agglomeration enjoy increasing returns from economies of scale

Ambiguous concepts

Variety, diversity, difference: a relative concept of agglomeration and the clustering of activities

Not only higher ‘abundance’, ‘difference’ or ‘number’, but also the degrees of ‘richness’, ‘concentration’ or ‘evenness’ (Yuo and Tseng 2021)

Different ways to measure (Bettencourt 2021)

… aka variety

\(D = \sum_{i}^n p_{i}^0\)

\(p_i\) is the proportion of data points in the \(i\)th category

\(n\) is the number of total categories

A count of different species / categories / …

Interpretation:

Plurality

Availability of options

\(H = -\sum_{i}^n p_{i} \ln{p_{i}}\)

\(n\) is the number of total categories

\(p_i\) is the proportion of data points in the \(i\)th category

Probably the most common diversity index.

Interpretation:

If one category dominates ➔ less surprise ➔ low entropy

No category dominates ➔ more surprise ➔ high entropy

\(HHI = \sum_{i}^{n}(p_{i}^2)\)

\(p_i\) is the proportion of data points in the \(i\)th category

Concentration of the market.

Interpretation:

\(1/n \leq HHI \leq 1\)

Two scenarios:

Caution: alternative specification

\(HHI = 1- \sum_{i}^{n}(p_{i}^2)\)

Relatedness spans the continuum between MAR and Jacobs (Hidalgo 2021)

Related activities are neither exactly the same nor completely different (Frenken, Van Oort, and Verburg 2007; Boschma et al. 2012)

Why? Because:

identical activities compete for customers and resources,

no learning between very dissimilar economic activities

Large scale fine-grained data on economic activities

Learn about abstract factors of production and the way they combine into outputs

Dimensionality reduction techniques to data on the geography of activities, e.g. employment by industry or patents by technology

Machine learning and network techniques to predict and explain the economic trajectories of countries, cities and regions

For a review, check Hidalgo (2021) and Balland et al. (2022).

Source: Companies House

Go to data.london.gov.uk

Download and and save locally the Businesses-in-London.csv

Make sure you know the file location!

We will use the REAT and entropy packages. Check what these packages do here and here.

Install them if needed with install.packages("packagename")

library(tidyverse) # for data wrangling

library(rprojroot) # for relative paths

library(REAT) # for diversity measures

library(entropy) # for entropy

library(cluster) # for cluster analysis

library(factoextra) # help functions for clustering

library(kableExtra) # for nice html tables

library(dbscan) # for HDBSCAL

library(sf) # for mapping

# This is the project path

path <- find_rstudio_root_file()

path.data <- paste0(path, "/data/businesses-in-london.csv")

london.firms <- read_csv(path.data)

london.firms.sum <- london.firms %>%

filter(SICCode.SicText_1!="None Supplied") %>% # dropping NAs in essence

group_by(oslaua, SICCode.SicText_1) %>% # grouping by Local Authority and SIC code

summarise(n = n()) %>% # summarise: n is the number of firms per Local Authority and SIC code

mutate(total = sum(n), # total equal all firms

freq = n / total) %>% # just a frequency

group_by(oslaua) %>% # grouping again only by Local Authority

summarise(richness = n_distinct(SICCode.SicText_1), # the number of distinct SIC per Local Authority

entropy = entropy(freq, method = "ML"), # entropy for each Local Authority, we did the first group_by() and mutate() to be able to calculate freq so we can calculate entropy

herf = herf(n)) %>% # HHI for each local authority

arrange(-herf) # sort based on HHI (descending)

london.firms.sum %>% kbl() %>%

kable_styling(full_width = F, font_size = 12) %>% # A nice(r) table

scroll_box(width = "1200px", height = "300px")| oslaua | richness | entropy | herf |

|---|---|---|---|

| E09000013 | 602 | 4.637865 | 0.0301165 |

| E09000001 | 744 | 4.520774 | 0.0288396 |

| E09000033 | 827 | 4.607933 | 0.0258339 |

| E09000003 | 723 | 4.671225 | 0.0233361 |

| E09000008 | 678 | 4.786546 | 0.0221182 |

| E09000027 | 558 | 4.712318 | 0.0220274 |

| E09000015 | 633 | 4.730619 | 0.0205194 |

| E09000010 | 667 | 4.790814 | 0.0204714 |

| E09000007 | 827 | 4.829186 | 0.0204085 |

| E09000020 | 577 | 4.774282 | 0.0196181 |

| E09000030 | 690 | 4.802693 | 0.0193494 |

| E09000025 | 623 | 4.816502 | 0.0188794 |

| E09000032 | 571 | 4.831936 | 0.0184425 |

| E09000026 | 630 | 4.806874 | 0.0180738 |

| E09000012 | 729 | 4.915068 | 0.0178886 |

| E09000014 | 595 | 4.830779 | 0.0178559 |

| E09000019 | 803 | 4.904964 | 0.0178424 |

| E09000006 | 599 | 4.833756 | 0.0177523 |

| E09000021 | 529 | 4.856868 | 0.0176720 |

| E09000004 | 569 | 4.849445 | 0.0176348 |

| E09000024 | 582 | 4.860828 | 0.0168338 |

| E09000018 | 603 | 4.906424 | 0.0163947 |

| E09000029 | 543 | 4.879529 | 0.0162662 |

| E09000022 | 594 | 4.925276 | 0.0157286 |

| E09000017 | 640 | 4.960676 | 0.0153900 |

| E09000005 | 644 | 4.958913 | 0.0146799 |

| E09000016 | 564 | 4.936603 | 0.0145663 |

| E09000028 | 681 | 4.996227 | 0.0145643 |

| E09000002 | 543 | 4.916217 | 0.0143203 |

| E09000009 | 645 | 4.992793 | 0.0142574 |

| E09000031 | 587 | 4.977460 | 0.0136438 |

| E09000011 | 565 | 4.995980 | 0.0136430 |

| E09000023 | 540 | 5.008342 | 0.0130329 |

Tip

You don’t know what local authorities these codes refer to. You should download the codes and names and join them with your data from here.

Tip

Discuss what we can learn from this exercise.

Can you think of a way to understand how different these indices are among London’s Local Authorities?

path.shape <- paste0(path, "/data/Local_Authority_Districts_(May_2021)_UK_BFE.geojson")

london <- st_read(path.shape, quiet = T) %>%

dplyr::filter(LAD21CD %in% (london.firms$oslaua)) # Pay attention on the %in% operator. It means 'within'.

london <- merge(london, london.firms.sum, by.x = "LAD21CD", by.y = "oslaua" )

ggplot() +

geom_sf(data = london, aes(fill = entropy), color = NA) +

labs(

title = "Business diversity in London' Local Autorities",

fill = "Entropy") +

scale_fill_viridis_c() +

theme_void() +

theme(plot.title = element_text(hjust = 0.5)) # centres the titleReducing the dimensions of the observation space

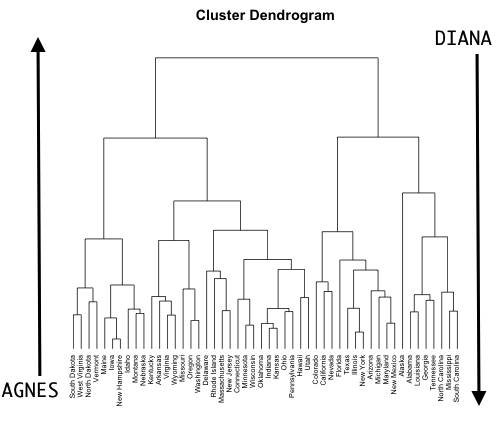

Classification of observations into (exclusive) groups

Distance or (dis)similarity between each pair of observations to create a distance or dissimilarity or matrix

Observations within the same group are as similar as possible

Plenty of other resources online and in textbooks

Source: medium.com

k-means

Hierarchical clustering

k is the number of clusters and is pre-defined

The algorithm selects k random observations (starting centres)

The remaining observations are assigned to the nearest centre

Recalculates the new centres

Re-check cluster assignment

Iterative process to minimise within-cluster variation until convergence

\(SS_{within} = \sum_{k=1}^k W(C_{k}) = \sum_{k=1}^k \sum_{x_i\in C_K}(x_i-\mu_k)^2\)

First, create an appropriate data frame:

la.sic <- london.firms %>%

filter(SICCode.SicText_1!="None Supplied") %>% # Drop firms which haven't declared SIC code

group_by(oslaua, SICCode.SicText_1) %>% # Group by Local Authorities and SIC code

summarise(n = n()) %>% # Summarise; n = number of observations

mutate(total = sum(n), # New column: total number of observations

freq = n / total) %>% # New column: frequency

arrange(oslaua,-n) %>% # Just arrange by Local Authority and descenting order of n

select(-n, -total) %>% # Drop n and total, we don't need them any more.

pivot_wider(names_from = SICCode.SicText_1, values_from = freq) %>% # Data transformation: from long to wide. Have a look: https://tidyr.tidyverse.org/reference/pivot_wider.html

replace(is.na(.), 0) # Replace any missing values with 0 as missing value represent SIC codes with 0 frequency

la.sic %>%

select(1:20) %>% # Select the first 20 columns as there 1037 in total

kbl() %>%

kable_styling(full_width = F, font_size = 11) %>% # Nice(r) table

scroll_box(width = "1200px", height = "800px")| oslaua | 82990 - Other business support service activities n.e.c. | 64209 - Activities of other holding companies n.e.c. | 70229 - Management consultancy activities other than financial management | 64999 - Financial intermediation not elsewhere classified | 99999 - Dormant Company | 74990 - Non-trading company | 70100 - Activities of head offices | 68209 - Other letting and operating of own or leased real estate | 62020 - Information technology consultancy activities | 68100 - Buying and selling of own real estate | 65120 - Non-life insurance | 96090 - Other service activities n.e.c. | 41100 - Development of building projects | 62012 - Business and domestic software development | 62090 - Other information technology service activities | 35110 - Production of electricity | 64205 - Activities of financial services holding companies | 74909 - Other professional, scientific and technical activities n.e.c. | 65110 - Life insurance |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

| E09000001 | 0.1108145 | 0.0606884 | 0.0401823 | 0.0379038 | 0.0363252 | 0.0361787 | 0.0271462 | 0.0261046 | 0.0247376 | 0.0239889 | 0.0239238 | 0.0232403 | 0.0208642 | 0.0198714 | 0.0193018 | 0.0183579 | 0.0161282 | 0.0155586 | 0.0117829 |

| E09000002 | 0.0270344 | 0.0027363 | 0.0241340 | 0.0024626 | 0.0061840 | 0.0026816 | 0.0013681 | 0.0173480 | 0.0254474 | 0.0313030 | 0.0001095 | 0.0348602 | 0.0163629 | 0.0068954 | 0.0107262 | 0.0000547 | 0.0004378 | 0.0078258 | 0.0000547 |

| E09000003 | 0.0600694 | 0.0131911 | 0.0403222 | 0.0081328 | 0.0314536 | 0.0152407 | 0.0037313 | 0.0658109 | 0.0258041 | 0.0686751 | 0.0003942 | 0.0442374 | 0.0313223 | 0.0093678 | 0.0109181 | 0.0010511 | 0.0012876 | 0.0106816 | 0.0003022 |

| E09000004 | 0.0714510 | 0.0073915 | 0.0429860 | 0.0039316 | 0.0150975 | 0.0074963 | 0.0025163 | 0.0291466 | 0.0347033 | 0.0404173 | 0.0004718 | 0.0300377 | 0.0214406 | 0.0071818 | 0.0114280 | 0.0004194 | 0.0004194 | 0.0112183 | 0.0001573 |

| E09000005 | 0.0357725 | 0.0060626 | 0.0302829 | 0.0057610 | 0.0234964 | 0.0061229 | 0.0027749 | 0.0451228 | 0.0273572 | 0.0451529 | 0.0002111 | 0.0339326 | 0.0193039 | 0.0080533 | 0.0110394 | 0.0003318 | 0.0006937 | 0.0120951 | 0.0000603 |

| E09000006 | 0.0475336 | 0.0079285 | 0.0482441 | 0.0065073 | 0.0258798 | 0.0078537 | 0.0035529 | 0.0407644 | 0.0376977 | 0.0391937 | 0.0012716 | 0.0269270 | 0.0253562 | 0.0119675 | 0.0129773 | 0.0002618 | 0.0007854 | 0.0157448 | 0.0001870 |

| E09000007 | 0.0541925 | 0.0147704 | 0.0451042 | 0.0091985 | 0.0176188 | 0.0095582 | 0.0063354 | 0.0248425 | 0.0352450 | 0.0353404 | 0.0002790 | 0.0431734 | 0.0260612 | 0.0269274 | 0.0163561 | 0.0019454 | 0.0036706 | 0.0121643 | 0.0001688 |

| E09000008 | 0.0358566 | 0.0042869 | 0.0328511 | 0.0057082 | 0.0886745 | 0.0097621 | 0.0027492 | 0.0308940 | 0.0316861 | 0.0352275 | 0.0002563 | 0.0260246 | 0.0205261 | 0.0105543 | 0.0156800 | 0.0003728 | 0.0027026 | 0.0091098 | 0.0002097 |

| E09000009 | 0.0325698 | 0.0062051 | 0.0333419 | 0.0044036 | 0.0177290 | 0.0084928 | 0.0036602 | 0.0394327 | 0.0277087 | 0.0445226 | 0.0002002 | 0.0352864 | 0.0220468 | 0.0095222 | 0.0106374 | 0.0002860 | 0.0011152 | 0.0100369 | 0.0000858 |

| E09000010 | 0.0361647 | 0.0106993 | 0.0303793 | 0.0056159 | 0.0855462 | 0.0089807 | 0.0024207 | 0.0462347 | 0.0218102 | 0.0379076 | 0.0004115 | 0.0357532 | 0.0213987 | 0.0127811 | 0.0115466 | 0.0002421 | 0.0017913 | 0.0091501 | 0.0002421 |

| E09000011 | 0.0352018 | 0.0036909 | 0.0420300 | 0.0038754 | 0.0134717 | 0.0044752 | 0.0021223 | 0.0251903 | 0.0373702 | 0.0333564 | 0.0003691 | 0.0352480 | 0.0183622 | 0.0120877 | 0.0130104 | 0.0004614 | 0.0014302 | 0.0120415 | 0.0000923 |

| E09000012 | 0.0512285 | 0.0097657 | 0.0496767 | 0.0063622 | 0.0329695 | 0.0031242 | 0.0022862 | 0.0412559 | 0.0384524 | 0.0476801 | 0.0002897 | 0.0205038 | 0.0148865 | 0.0235245 | 0.0167486 | 0.0015621 | 0.0025449 | 0.0118450 | 0.0000621 |

| E09000013 | 0.0423722 | 0.0123893 | 0.0448190 | 0.0076899 | 0.0190306 | 0.0101755 | 0.0087774 | 0.1304179 | 0.0213220 | 0.0310704 | 0.0001554 | 0.0332453 | 0.0167780 | 0.0116126 | 0.0129719 | 0.0013982 | 0.0015147 | 0.0148361 | 0.0000388 |

| E09000014 | 0.0339895 | 0.0056534 | 0.0407460 | 0.0045848 | 0.0256127 | 0.0055500 | 0.0031370 | 0.0527423 | 0.0272674 | 0.0578096 | 0.0001379 | 0.0438829 | 0.0167879 | 0.0113758 | 0.0085491 | 0.0003792 | 0.0009307 | 0.0091696 | 0.0000345 |

| E09000015 | 0.0486010 | 0.0124162 | 0.0466257 | 0.0083570 | 0.0189498 | 0.0074019 | 0.0031692 | 0.0576744 | 0.0423495 | 0.0634049 | 0.0002605 | 0.0342313 | 0.0266123 | 0.0127418 | 0.0140876 | 0.0002171 | 0.0012373 | 0.0184940 | 0.0001954 |

| E09000016 | 0.0449873 | 0.0058253 | 0.0335324 | 0.0070981 | 0.0186019 | 0.0052379 | 0.0017623 | 0.0327981 | 0.0270217 | 0.0414137 | 0.0008811 | 0.0315743 | 0.0251126 | 0.0092520 | 0.0097415 | 0.0000979 | 0.0009301 | 0.0105248 | 0.0000979 |

| E09000017 | 0.0340671 | 0.0094125 | 0.0317595 | 0.0047062 | 0.0160012 | 0.0068316 | 0.0052528 | 0.0389252 | 0.0357674 | 0.0526188 | 0.0003036 | 0.0352513 | 0.0235616 | 0.0123273 | 0.0135722 | 0.0002429 | 0.0015181 | 0.0123577 | 0.0002429 |

| E09000018 | 0.0348997 | 0.0088552 | 0.0347881 | 0.0054322 | 0.0130223 | 0.0102690 | 0.0069576 | 0.0298396 | 0.0549540 | 0.0416341 | 0.0002232 | 0.0361275 | 0.0193474 | 0.0161848 | 0.0141385 | 0.0004093 | 0.0013766 | 0.0104178 | 0.0001488 |

| E09000019 | 0.0509646 | 0.0133515 | 0.0490896 | 0.0090981 | 0.0198825 | 0.0094630 | 0.0062919 | 0.0248783 | 0.0370846 | 0.0317616 | 0.0005537 | 0.0470006 | 0.0223615 | 0.0277725 | 0.0190771 | 0.0017617 | 0.0040646 | 0.0131753 | 0.0002139 |

| E09000020 | 0.0499206 | 0.0187149 | 0.0547281 | 0.0133494 | 0.0227926 | 0.0141649 | 0.0104735 | 0.0411641 | 0.0188866 | 0.0365712 | 0.0002575 | 0.0403056 | 0.0202601 | 0.0148517 | 0.0121475 | 0.0031335 | 0.0041207 | 0.0146371 | 0.0000000 |

| E09000021 | 0.0478516 | 0.0081613 | 0.0512695 | 0.0039063 | 0.0151367 | 0.0095564 | 0.0043248 | 0.0353655 | 0.0399693 | 0.0358538 | 0.0006975 | 0.0272740 | 0.0177176 | 0.0142997 | 0.0145787 | 0.0002790 | 0.0014648 | 0.0150670 | 0.0002790 |

| E09000022 | 0.0335485 | 0.0086577 | 0.0420129 | 0.0044448 | 0.0180111 | 0.0090055 | 0.0052951 | 0.0372976 | 0.0252773 | 0.0306497 | 0.0001546 | 0.0331620 | 0.0143006 | 0.0117497 | 0.0112472 | 0.0028215 | 0.0009276 | 0.0134890 | 0.0000773 |

| E09000023 | 0.0320543 | 0.0035557 | 0.0378921 | 0.0041925 | 0.0113570 | 0.0044579 | 0.0016982 | 0.0268535 | 0.0312583 | 0.0294008 | 0.0000531 | 0.0311522 | 0.0145412 | 0.0110917 | 0.0118877 | 0.0001061 | 0.0013268 | 0.0110917 | 0.0000531 |

| E09000024 | 0.0354735 | 0.0083443 | 0.0490793 | 0.0051381 | 0.0251973 | 0.0069878 | 0.0037817 | 0.0349803 | 0.0517511 | 0.0415571 | 0.0005344 | 0.0318563 | 0.0206347 | 0.0218267 | 0.0152088 | 0.0011098 | 0.0009865 | 0.0107284 | 0.0001644 |

| E09000025 | 0.0443489 | 0.0029330 | 0.0243489 | 0.0040202 | 0.0653097 | 0.0060430 | 0.0018710 | 0.0201770 | 0.0310999 | 0.0305689 | 0.0001517 | 0.0287737 | 0.0136030 | 0.0141087 | 0.0113780 | 0.0001264 | 0.0010619 | 0.0068015 | 0.0001517 |

| E09000026 | 0.0483713 | 0.0060341 | 0.0313726 | 0.0041454 | 0.0177836 | 0.0118475 | 0.0019133 | 0.0443240 | 0.0351011 | 0.0608320 | 0.0003434 | 0.0384615 | 0.0226403 | 0.0095418 | 0.0106211 | 0.0001962 | 0.0008830 | 0.0094192 | 0.0001472 |

| E09000027 | 0.0492061 | 0.0080081 | 0.0828589 | 0.0082396 | 0.0301810 | 0.0121742 | 0.0049067 | 0.0392075 | 0.0431422 | 0.0453641 | 0.0007406 | 0.0244410 | 0.0208767 | 0.0152294 | 0.0154145 | 0.0006018 | 0.0010647 | 0.0200435 | 0.0002314 |

| E09000028 | 0.0530189 | 0.0184414 | 0.0437391 | 0.0094275 | 0.0362030 | 0.0226084 | 0.0137424 | 0.0285782 | 0.0310016 | 0.0226084 | 0.0004433 | 0.0282235 | 0.0125307 | 0.0165204 | 0.0143630 | 0.0043148 | 0.0039306 | 0.0142152 | 0.0001773 |

| E09000029 | 0.0425879 | 0.0045226 | 0.0459799 | 0.0059673 | 0.0157663 | 0.0069724 | 0.0035176 | 0.0339824 | 0.0466080 | 0.0415829 | 0.0005025 | 0.0298995 | 0.0246859 | 0.0138191 | 0.0140704 | 0.0002513 | 0.0020101 | 0.0120603 | 0.0000628 |

| E09000030 | 0.0652078 | 0.0159285 | 0.0484445 | 0.0199271 | 0.0342956 | 0.0172467 | 0.0089199 | 0.0253977 | 0.0509271 | 0.0293084 | 0.0007250 | 0.0367343 | 0.0160603 | 0.0187187 | 0.0393927 | 0.0019554 | 0.0097109 | 0.0105457 | 0.0001977 |

| E09000031 | 0.0310170 | 0.0067606 | 0.0308121 | 0.0036876 | 0.0120052 | 0.0036057 | 0.0014750 | 0.0304024 | 0.0251168 | 0.0375727 | 0.0002049 | 0.0402360 | 0.0259772 | 0.0095059 | 0.0092190 | 0.0006556 | 0.0009424 | 0.0093420 | 0.0000410 |

| E09000032 | 0.0381731 | 0.0072472 | 0.0655832 | 0.0074624 | 0.0180461 | 0.0091845 | 0.0041976 | 0.0402540 | 0.0311771 | 0.0355900 | 0.0003946 | 0.0311771 | 0.0202346 | 0.0123417 | 0.0119470 | 0.0008969 | 0.0018297 | 0.0158935 | 0.0000359 |

| E09000033 | 0.0871492 | 0.0369990 | 0.0475242 | 0.0180250 | 0.0727706 | 0.0228702 | 0.0137720 | 0.0385974 | 0.0192951 | 0.0375056 | 0.0004424 | 0.0307623 | 0.0292781 | 0.0150850 | 0.0143358 | 0.0047239 | 0.0074997 | 0.0153490 | 0.0001570 |

kclust is the object with the output of the \(k=10\) k-means function. After looking at its structure with str(kclust), there are two useful thing to do:

List of 9

$ cluster : int [1:33] 10 3 6 9 3 1 5 8 3 8 ...

$ centers : num [1:10, 1:1036] 0.0444 0.0443 0.0361 0.0591 0.0521 ...

..- attr(*, "dimnames")=List of 2

.. ..$ : chr [1:10] "1" "2" "3" "4" ...

.. ..$ : chr [1:1036] "82990 - Other business support service activities n.e.c." "64209 - Activities of other holding companies n.e.c." "70229 - Management consultancy activities other than financial management" "64999 - Financial intermediation not elsewhere classified" ...

$ totss : num 0.0945

$ withinss : num [1:10] 0.0042 0 0.00609 0.00112 0.0019 ...

$ tot.withinss: num 0.0235

$ betweenss : num 0.071

$ size : int [1:10] 6 1 8 2 3 3 1 2 5 2

$ iter : int 3

$ ifault : int 0

- attr(*, "class")= chr "kmeans"First, the list cluster contains the \(k = 10\) cluster solution. This is an array with a length of 33. The first entry is the cluster membership for the first LA, the second entry for the second LA etc.

Secondly, the list centers contains the centres of the new clusters. In other words, the average frequency of each SIC code (i.e. sector) for the 10 new clusters. centers is a \(10*1036\) table, with 1036 being the number of SIC codes. We can use these centres to create labels (aka pen portraits) for each cluster. These are, in essence, brief descriptions of the clusters.

kclust$centers %>% as.tibble() %>% # convert to tibble

mutate(cluster = (1:10)) %>% # create a Cluster ID column ...

relocate(cluster) %>% # ... and bring it in the first position

kbl() %>% # Create a nice(r) table

kable_styling(full_width = F, font_size = 11) %>%

scroll_box(width = "1200px", height = "800px")| cluster | 82990 - Other business support service activities n.e.c. | 64209 - Activities of other holding companies n.e.c. | 70229 - Management consultancy activities other than financial management | 64999 - Financial intermediation not elsewhere classified | 99999 - Dormant Company | 74990 - Non-trading company | 70100 - Activities of head offices | 68209 - Other letting and operating of own or leased real estate | 62020 - Information technology consultancy activities | 68100 - Buying and selling of own real estate | 65120 - Non-life insurance | 96090 - Other service activities n.e.c. | 41100 - Development of building projects | 62012 - Business and domestic software development | 62090 - Other information technology service activities | 35110 - Production of electricity | 64205 - Activities of financial services holding companies | 74909 - Other professional, scientific and technical activities n.e.c. | 65110 - Life insurance | 47910 - Retail sale via mail order houses or via Internet | 68320 - Management of real estate on a fee or contract basis | 78109 - Other activities of employment placement agencies | 66300 - Fund management activities | 66220 - Activities of insurance agents and brokers | 98000 - Residents property management | 63990 - Other information service activities n.e.c. | 59111 - Motion picture production activities | 46190 - Agents involved in the sale of a variety of goods | 66190 - Activities auxiliary to financial intermediation n.e.c. | 73110 - Advertising agencies | 86900 - Other human health activities | 69102 - Solicitors | 70221 - Financial management | 66290 - Other activities auxiliary to insurance and pension funding | 85590 - Other education n.e.c. | 56101 - Licensed restaurants | 90030 - Artistic creation | 46900 - Non-specialised wholesale trade | 58290 - Other software publishing | 69201 - Accounting and auditing activities | 64301 - Activities of investment trusts | 55100 - Hotels and similar accommodation | 61900 - Other telecommunications activities | 70210 - Public relations and communications activities | 78200 - Temporary employment agency activities | 59200 - Sound recording and music publishing activities | 93199 - Other sports activities | 64303 - Activities of venture and development capital companies | 43999 - Other specialised construction activities n.e.c. | 63110 - Data processing, hosting and related activities | 74100 - specialised design activities | 69109 - Activities of patent and copyright agents; other legal activities n.e.c. | 47710 - Retail sale of clothing in specialised stores | 63120 - Web portals | 94990 - Activities of other membership organizations n.e.c. | 85600 - Educational support services | 47990 - Other retail sale not in stores, stalls or markets | 59113 - Television programme production activities | 68310 - Real estate agencies | 58190 - Other publishing activities | 79110 - Travel agency activities | 41201 - Construction of commercial buildings | 71111 - Architectural activities | 64991 - Security dealing on own account | 82110 - Combined office administrative service activities | 06100 - Extraction of crude petroleum | 72110 - Research and experimental development on biotechnology | 66120 - Security and commodity contracts dealing activities | 90010 - Performing arts | 64922 - Activities of mortgage finance companies | 86220 - Specialists medical practice activities | 78300 - Human resources provision and management of human resources functions | 72190 - Other research and experimental development on natural sciences and engineering | 41202 - Construction of domestic buildings | 64304 - Activities of open-ended investment companies | 58110 - Book publishing | 62011 - Ready-made interactive leisure and entertainment software development | 90020 - Support activities to performing arts | 71122 - Engineering related scientific and technical consulting activities | 56302 - Public houses and bars | 59112 - Video production activities | 71129 - Other engineering activities | 88990 - Other social work activities without accommodation n.e.c. | 64306 - Activities of real estate investment trusts | 94120 - Activities of professional membership organizations | 96020 - Hairdressing and other beauty treatment | 50200 - Sea and coastal freight water transport | 46180 - Agents specialized in the sale of other particular products | 47190 - Other retail sale in non-specialised stores | 56290 - Other food services | 64202 - Activities of production holding companies | 32990 - Other manufacturing n.e.c. | 64191 - Banks | 73120 - Media representation services | 93290 - Other amusement and recreation activities n.e.c. | 52290 - Other transportation support activities | 66110 - Administration of financial markets | 09100 - Support activities for petroleum and natural gas extraction | 68201 - Renting and operating of Housing Association real estate | 64910 - Financial leasing | 86101 - Hospital activities | 46420 - Wholesale of clothing and footwear | 56103 - Take-away food shops and mobile food stands | 7499 - Non-trading company | 94110 - Activities of business and employers membership organizations | 43210 - Electrical installation | 43390 - Other building completion and finishing | 56102 - Unlicensed restaurants and cafes | 65300 - Pension funding | 58142 - Publishing of consumer and business journals and periodicals | 69101 - Barristers at law | 46342 - Wholesale of wine, beer, spirits and other alcoholic beverages | 55900 - Other accommodation | 64929 - Other credit granting n.e.c. | 49410 - Freight transport by road | 87100 - Residential nursing care facilities | 74901 - Environmental consulting activities | 61200 - Wireless telecommunications activities | 7487 - Other business activities | 08990 - Other mining and quarrying n.e.c. | 73200 - Market research and public opinion polling | 46510 - Wholesale of computers, computer peripheral equipment and software | 69203 - Tax consultancy | 61100 - Wired telecommunications activities | 14190 - Manufacture of other wearing apparel and accessories n.e.c. | 43290 - Other construction installation | 18129 - Printing n.e.c. | 71121 - Engineering design activities for industrial process and production | 46450 - Wholesale of perfume and cosmetics | 86210 - General medical practice activities | 46160 - Agents involved in the sale of textiles, clothing, fur, footwear and leather goods | 46460 - Wholesale of pharmaceutical goods | 47750 - Retail sale of cosmetic and toilet articles in specialised stores | 80100 - Private security activities | 92000 - Gambling and betting activities | 64921 - Credit granting by non-deposit taking finance houses and other specialist consumer credit grantors | 77351 - Renting and leasing of air passenger transport equipment | 81100 - Combined facilities support activities | 79120 - Tour operator activities | 93130 - Fitness facilities | 42990 - Construction of other civil engineering projects n.e.c. | 47789 - Other retail sale of new goods in specialised stores (not commercial art galleries and opticians) | 56210 - Event catering activities | 65202 - Non-life reinsurance | 69202 - Bookkeeping activities | 07290 - Mining of other non-ferrous metal ores | 45200 - Maintenance and repair of motor vehicles | 77400 - Leasing of intellectual property and similar products, except copyright works | 85520 - Cultural education | 35210 - Manufacture of gas | 59120 - Motion picture, video and television programme post-production activities | 79909 - Other reservation service activities n.e.c. | 46520 - Wholesale of electronic and telecommunications equipment and parts | 43220 - Plumbing, heat and air-conditioning installation | 66210 - Risk and damage evaluation | 87300 - Residential care activities for the elderly and disabled | 45112 - Sale of used cars and light motor vehicles | 47770 - Retail sale of watches and jewellery in specialised stores | 47781 - Retail sale in commercial art galleries | 71200 - Technical testing and analysis | 35140 - Trade of electricity | 60200 - Television programming and broadcasting activities | 85320 - Technical and vocational secondary education | 86230 - Dental practice activities | 46499 - Wholesale of household goods (other than musical instruments) n.e.c. | 55209 - Other holiday and other collective accommodation | 90040 - Operation of arts facilities | 32500 - Manufacture of medical and dental instruments and supplies | 45310 - Wholesale trade of motor vehicle parts and accessories | 46170 - Agents involved in the sale of food, beverages and tobacco | 46320 - Wholesale of meat and meat products | 58210 - Publishing of computer games | 64203 - Activities of construction holding companies | 85310 - General secondary education | 46370 - Wholesale of coffee, tea, cocoa and spices | 46760 - Wholesale of other intermediate products | 47599 - Retail of furniture, lighting, and similar (not musical instruments or scores) in specialised store | 81210 - General cleaning of buildings | 94910 - Activities of religious organizations | 46690 - Wholesale of other machinery and equipment | 62030 - Computer facilities management activities | 10890 - Manufacture of other food products n.e.c. | 35130 - Distribution of electricity | 64204 - Activities of distribution holding companies | 7415 - Holding Companies including Head Offices | 93110 - Operation of sports facilities | 46140 - Agents involved in the sale of machinery, industrial equipment, ships and aircraft | 46150 - Agents involved in the sale of furniture, household goods, hardware and ironmongery | 47290 - Other retail sale of food in specialised stores | 74902 - Quantity surveying activities | 77390 - Renting and leasing of other machinery, equipment and tangible goods n.e.c. | 85200 - Primary education | 4521 - Gen construction & civil engineer | 46410 - Wholesale of textiles | 46480 - Wholesale of watches and jewellery | 46719 - Wholesale of other fuels and related products | 47640 - Retail sale of sports goods, fishing gear, camping goods, boats and bicycles | 99000 - Activities of extraterritorial organizations and bodies | 45111 - Sale of new cars and light motor vehicles | 82302 - Activities of conference organisers | 87900 - Other residential care activities n.e.c. | 46720 - Wholesale of metals and metal ores | 52230 - Service activities incidental to air transportation | 59131 - Motion picture distribution activities | 42220 - Construction of utility projects for electricity and telecommunications | 46390 - Non-specialised wholesale of food, beverages and tobacco | 77110 - Renting and leasing of cars and light motor vehicles | 09900 - Support activities for other mining and quarrying | 72200 - Research and experimental development on social sciences and humanities | 93120 - Activities of sport clubs | 21100 - Manufacture of basic pharmaceutical products | 21200 - Manufacture of pharmaceutical preparations | 46120 - Agents involved in the sale of fuels, ores, metals and industrial chemicals | 47110 - Retail sale in non-specialised stores with food, beverages or tobacco predominating | 52219 - Other service activities incidental to land transportation, n.e.c. | 61300 - Satellite telecommunications activities | 71112 - Urban planning and landscape architectural activities | 74300 - Translation and interpretation activities | 26110 - Manufacture of electronic components | 7414 - Business & management consultancy | 96040 - Physical well-being activities | 01110 - Growing of cereals (except rice), leguminous crops and oil seeds | 49420 - Removal services | 82301 - Activities of exhibition and fair organisers | 85510 - Sports and recreation education | 01500 - Mixed farming | 51102 - Non-scheduled passenger air transport | 52103 - Operation of warehousing and storage facilities for land transport activities | 52220 - Service activities incidental to water transportation | 58130 - Publishing of newspapers | 64201 - Activities of agricultural holding companies | 20590 - Manufacture of other chemical products n.e.c. | 25990 - Manufacture of other fabricated metal products n.e.c. | 26511 - Manufacture of electronic measuring, testing etc. equipment, not for industrial process control | 39000 - Remediation activities and other waste management services | 46310 - Wholesale of fruit and vegetables | 46380 - Wholesale of other food, including fish, crustaceans and molluscs | 47721 - Retail sale of footwear in specialised stores | 47749 - Retail sale of medical and orthopaedic goods in specialised stores (not incl. hearing aids) n.e.c. | 80200 - Security systems service activities | 80300 - Investigation activities | 26200 - Manufacture of computers and peripheral equipment | 28990 - Manufacture of other special-purpose machinery n.e.c. | 43330 - Floor and wall covering | 22290 - Manufacture of other plastic products | 25110 - Manufacture of metal structures and parts of structures | 33200 - Installation of industrial machinery and equipment | 46470 - Wholesale of furniture, carpets and lighting equipment | 47791 - Retail sale of antiques including antique books in stores | 49320 - Taxi operation | 7011 - Development & sell real estate | 85421 - First-degree level higher education | 31090 - Manufacture of other furniture | 32120 - Manufacture of jewellery and related articles | 46750 - Wholesale of chemical products | 49100 - Passenger rail transport, interurban | 11010 - Distilling, rectifying and blending of spirits | 26400 - Manufacture of consumer electronics | 27400 - Manufacture of electric lighting equipment | 35120 - Transmission of electricity | 38320 - Recovery of sorted materials | 56301 - Licensed clubs | 74202 - Other specialist photography | 81300 - Landscape service activities | 85100 - Pre-primary education | 10710 - Manufacture of bread; manufacture of fresh pastry goods and cakes | 11050 - Manufacture of beer | 38110 - Collection of non-hazardous waste | 50100 - Sea and coastal passenger water transport | 81222 - Specialised cleaning services | 14132 - Manufacture of other women's outerwear | 36000 - Water collection, treatment and supply | 46439 - Wholesale of radio, television goods & electrical household appliances (other than records, tapes, CD's & video tapes and the equipment used for playing them) | 46711 - Wholesale of petroleum and petroleum products | 47410 - Retail sale of computers, peripheral units and software in specialised stores | 81299 - Other cleaning services | 96010 - Washing and (dry-)cleaning of textile and fur products | 10390 - Other processing and preserving of fruit and vegetables | 27900 - Manufacture of other electrical equipment | 31010 - Manufacture of office and shop furniture | 42110 - Construction of roads and motorways | 45320 - Retail trade of motor vehicle parts and accessories | 47540 - Retail sale of electrical household appliances in specialised stores | 59132 - Video distribution activities | 64302 - Activities of unit trusts | 82200 - Activities of call centres | 01430 - Raising of horses and other equines | 20420 - Manufacture of perfumes and toilet preparations | 33190 - Repair of other equipment | 47250 - Retail sale of beverages in specialised stores | 49390 - Other passenger land transport | 51101 - Scheduled passenger air transport | 58141 - Publishing of learned journals | 59133 - Television programme distribution activities | 74201 - Portrait photographic activities | 82190 - Photocopying, document preparation and other specialised office support activities | 84110 - General public administration activities | 96030 - Funeral and related activities | 27120 - Manufacture of electricity distribution and control apparatus | 43910 - Roofing activities | 47240 - Retail sale of bread, cakes, flour confectionery and sugar confectionery in specialised stores | 47610 - Retail sale of books in specialised stores | 53202 - Unlicensed carrier | 60100 - Radio broadcasting | 74209 - Photographic activities not elsewhere classified | 77320 - Renting and leasing of construction and civil engineering machinery and equipment | 77330 - Renting and leasing of office machinery and equipment (including computers) | 78101 - Motion picture, television and other theatrical casting activities | 87200 - Residential care activities for learning difficulties, mental health and substance abuse | 02100 - Silviculture and other forestry activities | 06200 - Extraction of natural gas | 11070 - Manufacture of soft drinks; production of mineral waters and other bottled waters | 13990 - Manufacture of other textiles n.e.c. | 15120 - Manufacture of luggage, handbags and the like, saddlery and harness | 16230 - Manufacture of other builders' carpentry and joinery | 18130 - Pre-press and pre-media services | 29100 - Manufacture of motor vehicles | 30300 - Manufacture of air and spacecraft and related machinery | 38210 - Treatment and disposal of non-hazardous waste | 43320 - Joinery installation | 46110 - Agents selling agricultural raw materials, livestock, textile raw materials and semi-finished goods | 46130 - Agents involved in the sale of timber and building materials | 46341 - Wholesale of fruit and vegetable juices, mineral water and soft drinks | 46730 - Wholesale of wood, construction materials and sanitary equipment | 47650 - Retail sale of games and toys in specialised stores | 47820 - Retail sale via stalls and markets of textiles, clothing and footwear | 47890 - Retail sale via stalls and markets of other goods | 75000 - Veterinary activities | 85422 - Post-graduate level higher education | 88100 - Social work activities without accommodation for the elderly and disabled | 14131 - Manufacture of other men's outerwear | 20130 - Manufacture of other inorganic basic chemicals | 32409 - Manufacture of other games and toys, n.e.c. | 33140 - Repair of electrical equipment | 43341 - Painting | 45190 - Sale of other motor vehicles | 46220 - Wholesale of flowers and plants | 47510 - Retail sale of textiles in specialised stores | 47730 - Dispensing chemist in specialised stores | 47782 - Retail sale by opticians | 47799 - Retail sale of other second-hand goods in stores (not incl. antiques) | 55201 - Holiday centres and villages | 65201 - Life reinsurance | 84120 - Regulation of health care, education, cultural and other social services, not incl. social security | 84230 - Justice and judicial activities | 9305 - Other service activities n.e.c. | 97000 - Activities of households as employers of domestic personnel | 03110 - Marine fishing | 10832 - Production of coffee and coffee substitutes | 17120 - Manufacture of paper and paperboard | 20140 - Manufacture of other organic basic chemicals | 20411 - Manufacture of soap and detergents | 24410 - Precious metals production | 28290 - Manufacture of other general-purpose machinery n.e.c. | 32300 - Manufacture of sports goods | 35230 - Trade of gas through mains | 43342 - Glazing | 47429 - Retail sale of telecommunications equipment other than mobile telephones | 47722 - Retail sale of leather goods in specialised stores | 47760 - Retail sale of flowers, plants, seeds, fertilizers, pet animals and pet food in specialised stores | 49200 - Freight rail transport | 63910 - News agency activities | 64110 - Central banking | 6523 - Other financial intermediation | 86102 - Medical nursing home activities | 18203 - Reproduction of computer media | 19201 - Mineral oil refining | 29310 - Manufacture of electrical and electronic equipment for motor vehicles and their engines | 43120 - Site preparation | 43310 - Plastering | 46330 - Wholesale of dairy products, eggs and edible oils and fats | 46740 - Wholesale of hardware, plumbing and heating equipment and supplies | 47430 - Retail sale of audio and video equipment in specialised stores | 51210 - Freight air transport | 53201 - Licensed carriers | 55300 - Recreational vehicle parks, trailer parks and camping grounds | 77342 - Renting and leasing of freight water transport equipment | 82911 - Activities of collection agencies | 82920 - Packaging activities | 91030 - Operation of historical sites and buildings and similar visitor attractions | 01610 - Support activities for crop production | 02400 - Support services to forestry | 10821 - Manufacture of cocoa and chocolate confectionery | 10831 - Tea processing | 10840 - Manufacture of condiments and seasonings | 14120 - Manufacture of workwear | 15200 - Manufacture of footwear | 17230 - Manufacture of paper stationery | 18201 - Reproduction of sound recording | 20301 - Manufacture of paints, varnishes and similar coatings, mastics and sealants | 24450 - Other non-ferrous metal production | 25620 - Machining | 26120 - Manufacture of loaded electronic boards | 26512 - Manufacture of electronic industrial process control equipment | 28131 - Manufacture of pumps | 46210 - Wholesale of grain, unmanufactured tobacco, seeds and animal feeds | 46660 - Wholesale of other office machinery and equipment | 46770 - Wholesale of waste and scrap | 47620 - Retail sale of newspapers and stationery in specialised stores | 47630 - Retail sale of music and video recordings in specialised stores | 77341 - Renting and leasing of passenger water transport equipment | 84130 - Regulation of and contribution to more efficient operation of businesses | 84240 - Public order and safety activities | 84250 - Fire service activities | 85410 - Post-secondary non-tertiary education | 91012 - Archives activities | 95110 - Repair of computers and peripheral equipment | 08910 - Mining of chemical and fertilizer minerals | 10850 - Manufacture of prepared meals and dishes | 13100 - Preparation and spinning of textile fibres | 27110 - Manufacture of electric motors, generators and transformers | 27320 - Manufacture of other electronic and electric wires and cables | 42120 - Construction of railways and underground railways | 4525 - Other special trades construction | 46360 - Wholesale of sugar and chocolate and sugar confectionery | 46610 - Wholesale of agricultural machinery, equipment and supplies | 47220 - Retail sale of meat and meat products in specialised stores | 49319 - Other urban, suburban or metropolitan passenger land transport (not underground, metro or similar) | 64305 - Activities of property unit trusts | 6512 - Other monetary intermediation | 79901 - Activities of tourist guides | 84220 - Defence activities | 93191 - Activities of racehorse owners | 01130 - Growing of vegetables and melons, roots and tubers | 05102 - Open cast coal working | 10410 - Manufacture of oils and fats | 13300 - Finishing of textiles | 13921 - Manufacture of soft furnishings | 17290 - Manufacture of other articles of paper and paperboard n.e.c. | 20150 - Manufacture of fertilizers and nitrogen compounds | 22220 - Manufacture of plastic packing goods | 23190 - Manufacture and processing of other glass, including technical glassware | 23700 - Cutting, shaping and finishing of stone | 24100 - Manufacture of basic iron and steel and of ferro-alloys | 25610 - Treatment and coating of metals | 30110 - Building of ships and floating structures | 30120 - Building of pleasure and sporting boats | 33120 - Repair of machinery | 33150 - Repair and maintenance of ships and boats | 35220 - Distribution of gaseous fuels through mains | 3614 - Manufacture of other furniture | 43991 - Scaffold erection | 46431 - Wholesale of audio tapes, records, CDs and video tapes and the equipment on which these are played | 46650 - Wholesale of office furniture | 47210 - Retail sale of fruit and vegetables in specialised stores | 47530 - Retail sale of carpets, rugs, wall and floor coverings in specialised stores | 52101 - Operation of warehousing and storage facilities for water transport activities | 58120 - Publishing of directories and mailing lists | 74203 - Film processing | 88910 - Child day-care activities | 91020 - Museums activities | 91040 - Botanical and zoological gardens and nature reserves activities | 95210 - Repair of consumer electronics | 01280 - Growing of spices, aromatic, drug and pharmaceutical crops | 01300 - Plant propagation | 01410 - Raising of dairy cattle | 01629 - Support activities for animal production (other than farm animal boarding and care) n.e.c. | 01630 - Post-harvest crop activities | 01700 - Hunting, trapping and related service activities | 05101 - Deep coal mines | 07100 - Mining of iron ores | 07210 - Mining of uranium and thorium ores | 10612 - Manufacture of breakfast cereals and cereals-based food | 10860 - Manufacture of homogenized food preparations and dietetic food | 13950 - Manufacture of non-wovens and articles made from non-wovens, except apparel | 13960 - Manufacture of other technical and industrial textiles | 14142 - Manufacture of women's underwear | 18202 - Reproduction of video recording | 20200 - Manufacture of pesticides and other agrochemical products | 22110 - Manufacture of rubber tyres and tubes; retreading and rebuilding of rubber tyres | 2213 - Publish journals & periodicals | 23610 - Manufacture of concrete products for construction purposes | 26301 - Manufacture of telegraph and telephone apparatus and equipment | 26309 - Manufacture of communication equipment other than telegraph, and telephone apparatus and equipment | 27310 - Manufacture of fibre optic cables | 28110 - Manufacture of engines and turbines, except aircraft, vehicle and cycle engines | 28140 - Manufacture of taps and valves | 28150 - Manufacture of bearings, gears, gearing and driving elements | 28220 - Manufacture of lifting and handling equipment | 2852 - General mechanical engineering | 2875 - Manufacture other fabricated metal products | 29320 - Manufacture of other parts and accessories for motor vehicles | 30200 - Manufacture of railway locomotives and rolling stock | 30920 - Manufacture of bicycles and invalid carriages | 31020 - Manufacture of kitchen furniture | 33130 - Repair of electronic and optical equipment | 33160 - Repair and maintenance of aircraft and spacecraft | 33170 - Repair and maintenance of other transport equipment n.e.c. | 38220 - Treatment and disposal of hazardous waste | 46350 - Wholesale of tobacco products | 46620 - Wholesale of machine tools | 46630 - Wholesale of mining, construction and civil engineering machinery | 47421 - Retail sale of mobile telephones | 47591 - Retail sale of musical instruments and scores | 5190 - Other wholesale | 52243 - Cargo handling for land transport activities | 6024 - Freight transport by road | 64992 - Factoring | 68202 - Letting and operating of conference and exhibition centres | 7220 - Software consultancy and supply | 77291 - Renting and leasing of media entertainment equipment | 98200 - Undifferentiated service-producing activities of private households for own use | 9999 - Dormant company | 01250 - Growing of other tree and bush fruits and nuts | 01420 - Raising of other cattle and buffaloes | 01621 - Farm animal boarding and care | 10200 - Processing and preserving of fish, crustaceans and molluscs | 10730 - Manufacture of macaroni, noodles, couscous and similar farinaceous products | 11020 - Manufacture of wine from grape | 11040 - Manufacture of other non-distilled fermented beverages | 12000 - Manufacture of tobacco products | 13923 - manufacture of household textiles | 16210 - Manufacture of veneer sheets and wood-based panels | 17220 - Manufacture of household and sanitary goods and of toilet requisites | 19209 - Other treatment of petroleum products (excluding petrochemicals manufacture) | 20110 - Manufacture of industrial gases | 20412 - Manufacture of cleaning and polishing preparations | 22190 - Manufacture of other rubber products | 2222 - Printing not elsewhere classified | 23410 - Manufacture of ceramic household and ornamental articles | 25720 - Manufacture of locks and hinges | 25730 - Manufacture of tools | 25930 - Manufacture of wire products, chain and springs | 26520 - Manufacture of watches and clocks | 26701 - Manufacture of optical precision instruments | 26702 - Manufacture of photographic and cinematographic equipment | 28490 - Manufacture of other machine tools | 28940 - Manufacture of machinery for textile, apparel and leather production | 29201 - Manufacture of bodies (coachwork) for motor vehicles (except caravans) | 3162 - Manufacture other electrical equipment | 32130 - Manufacture of imitation jewellery and related articles | 33110 - Repair of fabricated metal products | 35300 - Steam and air conditioning supply | 3663 - Other manufacturing | 42130 - Construction of bridges and tunnels | 4534 - Other building installation | 45400 - Sale, maintenance and repair of motorcycles and related parts and accessories | 5010 - Sale of motor vehicles | 50300 - Inland passenger water transport | 5142 - Wholesale of clothing and footwear | 52102 - Operation of warehousing and storage facilities for air transport activities | 52241 - Cargo handling for water transport activities | 55202 - Youth hostels | 6330 - Travel agencies etc; tourist | 6420 - Telecommunications | 6603 - Non-life insurance/reinsurance | 7012 - Buying & sell own real estate | 7020 - Letting of own property | 7411 - Legal activities | 7440 - Advertising | 7450 - Labour recruitment | 77210 - Renting and leasing of recreational and sports goods | 81229 - Other building and industrial cleaning activities | 85530 - Driving school activities | 91011 - Library activities | 93210 - Activities of amusement parks and theme parks | 94200 - Activities of trade unions | 94920 - Activities of political organizations | 95220 - Repair of household appliances and home and garden equipment | 95250 - Repair of watches, clocks and jewellery | 01210 - Growing of grapes | 01290 - Growing of other perennial crops | 01490 - Raising of other animals | 03120 - Freshwater fishing | 10512 - Butter and cheese production | 10519 - Manufacture of other milk products | 10910 - Manufacture of prepared feeds for farm animals | 10920 - Manufacture of prepared pet foods | 13200 - Weaving of textiles | 14110 - Manufacture of leather clothes | 1589 - Manufacture of other food products | 18110 - Printing of newspapers | 18121 - Manufacture of printed labels | 20160 - Manufacture of plastics in primary forms | 20170 - Manufacture of synthetic rubber in primary forms | 2051 - Manufacture of other products of wood | 2121 - Manufacture of cartons, boxes & cases of corrugated paper & paperboard | 23510 - Manufacture of cement | 24200 - Manufacture of tubes, pipes, hollow profiles and related fittings, of steel | 24420 - Aluminium production | 2452 - Manufacture perfumes & toilet preparations | 2466 - Manufacture of other chemical products | 25120 - Manufacture of doors and windows of metal | 25940 - Manufacture of fasteners and screw machine products | 2625 - Manufacture of other ceramic products | 26600 - Manufacture of irradiation, electromedical and electrotherapeutic equipment | 27200 - Manufacture of batteries and accumulators | 27510 - Manufacture of electric domestic appliances | 28120 - Manufacture of fluid power equipment | 28930 - Manufacture of machinery for food, beverage and tobacco processing | 2954 - Manufacture for textile, apparel & leather | 30400 - Manufacture of military fighting vehicles | 30990 - Manufacture of other transport equipment n.e.c. | 42910 - Construction of water projects | 43110 - Demolition | 46230 - Wholesale of live animals | 46640 - Wholesale of machinery for the textile industry and of sewing and knitting machines | 47260 - Retail sale of tobacco products in specialised stores | 47300 - Retail sale of automotive fuel in specialised stores | 47520 - Retail sale of hardware, paints and glass in specialised stores | 49311 - Urban and suburban passenger railway transportation by underground, metro and similar systems | 49500 - Transport via pipeline | 5147 - Wholesale of other household goods | 5244 - Retail furniture household etc | 53100 - Postal activities under universal service obligation | 5510 - Hotels & motels with or without restaurant | 5530 - Restaurants | 6711 - Administration of financial markets | 7031 - Real estate agencies | 7412 - Accounting, auditing; tax consult | 77120 - Renting and leasing of trucks and other heavy vehicles | 77299 - Renting and leasing of other personal and household goods | 81291 - Disinfecting and exterminating services | 9220 - Radio and television activities | 9234 - Other entertainment activities | 9261 - Operate sports arenas & stadiums | 9271 - Gambling and betting activities | 95230 - Repair of footwear and leather goods | 95240 - Repair of furniture and home furnishings | 98100 - Undifferentiated goods-producing activities of private households for own use | 01240 - Growing of pome fruits and stone fruits | 01270 - Growing of beverage crops | 0141 - Agricultural service activities | 01440 - Raising of camels and camelids | 03210 - Marine aquaculture | 03220 - Freshwater aquaculture | 08110 - Quarrying of ornamental and building stone, limestone, gypsum, chalk and slate | 08920 - Extraction of peat | 10110 - Processing and preserving of meat | 10511 - Liquid milk and cream production | 10520 - Manufacture of ice cream | 10620 - Manufacture of starches and starch products | 10720 - Manufacture of rusks and biscuits; manufacture of preserved pastry goods and cakes | 10810 - Manufacture of sugar | 10822 - Manufacture of sugar confectionery | 11030 - Manufacture of cider and other fruit wines | 13910 - Manufacture of knitted and crocheted fabrics | 13931 - Manufacture of woven or tufted carpets and rugs | 13940 - Manufacture of cordage, rope, twine and netting | 15110 - Tanning and dressing of leather; dressing and dyeing of fur | 1533 - Process etc. fruit, vegetables | 1581 - Manufacture of bread, fresh pastry & cakes | 1596 - Manufacture of beer | 16100 - Sawmilling and planing of wood | 16240 - Manufacture of wooden containers | 16290 - Manufacture of other products of wood; manufacture of articles of cork, straw and plaiting materials | 17110 - Manufacture of pulp | 17211 - Manufacture of corrugated paper and paperboard, sacks and bags | 17240 - Manufacture of wallpaper | 1725 - Other textile weaving | 1730 - Finishing of textiles | 1752 - Manufacture cordage, rope, twine & netting | 18140 - Binding and related services | 1822 - Manufacture of other outerwear | 1824 - Manufacture other wearing apparel etc. | 2010 - Sawmill, plane, impregnation wood | 2030 - Manufacture builders' carpentry & joinery | 20302 - Manufacture of printing ink | 20510 - Manufacture of explosives | 20530 - Manufacture of essential oils | 2123 - Manufacture of paper stationery | 2125 - Manufacture of paper & paperboard goods | 2215 - Other publishing | 22210 - Manufacture of plastic plates, sheets, tubes and profiles | 22230 - Manufacture of builders ware of plastic | 23120 - Shaping and processing of flat glass | 23140 - Manufacture of glass fibres | 23200 - Manufacture of refractory products | 23310 - Manufacture of ceramic tiles and flags | 23630 - Manufacture of ready-mixed concrete | 23990 - Manufacture of other non-metallic mineral products n.e.c. | 24330 - Cold forming or folding | 24440 - Copper production | 2451 - Manufacture soap & detergents, polishes etc. | 24510 - Casting of iron | 25210 - Manufacture of central heating radiators and boilers | 25910 - Manufacture of steel drums and similar containers | 2611 - Manufacture of flat glass | 26513 - Manufacture of non-electronic measuring, testing etc. equipment, not for industrial process control | 2742 - Aluminium production | 28250 - Manufacture of non-domestic cooling and ventilation equipment | 28301 - Manufacture of agricultural tractors | 28302 - Manufacture of agricultural and forestry machinery other than tractors | 28960 - Manufacture of plastics and rubber machinery | 2924 - Manufacture of other general machinery | 2952 - Manufacture machines for mining, quarry etc. | 2971 - Manufacture of electric domestic appliances | 30910 - Manufacture of motorcycles | 32200 - Manufacture of musical instruments | 32401 - Manufacture of professional and arcade games and toys | 3310 - Manufacture medical, orthopaedic etc. equipment | 3350 - Manufacture of watches and clocks | 3420 - Manufacture motor vehicle bodies etc. | 3511 - Building and repairing of ships | 3612 - Manufacture other office & shop furniture | 38120 - Collection of hazardous waste | 43130 - Test drilling and boring | 4511 - Demolition buildings; earth moving | 4522 - Erection of roof covering & frames | 4531 - Installation electrical wiring etc. | 4532 - Insulation work activities | 4533 - Plumbing | 4543 - Floor and wall covering | 4550 - Rent construction equipment with operator | 46240 - Wholesale of hides, skins and leather | 46440 - Wholesale of china and glassware and cleaning materials | 46491 - Wholesale of musical instruments | 47230 - Retail sale of fish, crustaceans and molluscs in specialised stores | 5050 - Retail sale of automotive fuel | 5115 - Agents in household goods, etc. | 51220 - Space transport | 5134 - Wholesale of alcohol and other drinks | 5141 - Wholesale of textiles | 5143 - Wholesale electric household goods | 5154 - Wholesale hardware, plumbing etc. | 5170 - Other wholesale | 5212 - Other retail non-specialised stores | 52212 - Operation of rail passenger facilities at railway stations | 52213 - Operation of bus and coach passenger facilities at bus and coach stations | 5224 - Retail bread, cakes, confectionery | 52242 - Cargo handling for air transport activities | 5250 - Retail other secondhand goods | 59140 - Motion picture projection activities | 6010 - Transport via railways | 6312 - Storage & warehousing | 6323 - Other supporting air transport | 6340 - Other transport agencies | 6521 - Financial leasing | 6601 - Life insurance/reinsurance | 6712 - Security broking & fund management | 7032 - Manage real estate, fee or contract | 7134 - Rent other machinery & equip | 7222 - Other software consultancy and supply | 7260 - Other computer related activities | 7413 - Market research, opinion polling | 7484 - Other business activities | 8021 - General secondary education | 8042 - Adult and other education | 81221 - Window cleaning services | 82912 - Activities of credit bureaus | 9112 - Professional organisations | 9251 - Library and archives activities | 95120 - Repair of communication equipment | 95290 - Repair of personal and household goods n.e.c. | 47810 - Retail sale via stalls and markets of food, beverages and tobacco products | 17219 - Manufacture of other paper and paperboard containers | 23320 - Manufacture of bricks, tiles and construction products, in baked clay | 10130 - Production of meat and poultry meat products | 31030 - Manufacture of mattresses | 37000 - Sewerage | 38310 - Dismantling of wrecks | 01160 - Growing of fibre crops | 01450 - Raising of sheep and goats | 01470 - Raising of poultry | 02300 - Gathering of wild growing non-wood products | 10320 - Manufacture of fruit and vegetable juice | 1740 - Manufacture made-up textiles, not apparel | 2320 - Manufacture of refined petroleum products | 23620 - Manufacture of plaster products for construction purposes | 23690 - Manufacture of other articles of concrete, plaster and cement | 24520 - Casting of steel | 26514 - Manufacture of non-electronic industrial process control equipment | 27520 - Manufacture of non-electric domestic appliances | 28240 - Manufacture of power-driven hand tools | 3210 - Manufacture of electronic components | 42210 - Construction of utility projects for fluids | 4544 - Painting and glazing | 50400 - Inland freight water transport | 5144 - Wholesale of china, wallpaper etc. | 5152 - Wholesale of metals and metal ores | 64192 - Building societies | 7460 - Investigation & security | 84210 - Foreign affairs | 9211 - Motion picture and video production | 4545 - Other building completion | 5540 - Bars | 01190 - Growing of other non-perennial crops | 02200 - Logging | 8531 - Social work with accommodation | 08930 - Extraction of salt | 14390 - Manufacture of other knitted and crocheted apparel | 16220 - Manufacture of assembled parquet floors | 1823 - Manufacture of underwear | 23440 - Manufacture of other technical ceramic products | 28922 - Manufacture of earthmoving equipment | 32910 - Manufacture of brooms and brushes | 5131 - Wholesale of fruit and vegetables | 7420 - Architectural, technical consult | 77310 - Renting and leasing of agricultural machinery and equipment | 81223 - Furnace and chimney cleaning services | 8514 - Other human health activities | 9231 - Artistic & literary creation etc | 01120 - Growing of rice | 01460 - Raising of swine/pigs | 08120 - Operation of gravel and sand pits; mining of clays and kaolin | 10120 - Processing and preserving of poultry meat | 10611 - Grain milling | 11060 - Manufacture of malt | 13922 - manufacture of canvas goods, sacks, etc. | 13939 - Manufacture of other carpets and rugs | 14141 - Manufacture of men's underwear | 14200 - Manufacture of articles of fur | 1920 - Manufacture of luggage & the like, saddlery | 20120 - Manufacture of dyes and pigments | 2225 - Ancillary printing operations | 23110 - Manufacture of flat glass | 23490 - Manufacture of other ceramic products n.e.c. | 2415 - Manufacture fertilizers, nitrogen compounds | 2430 - Manufacture of paints, print ink & mastics etc. | 24430 - Lead, zinc and tin production | 24530 - Casting of light metals | 25290 - Manufacture of other tanks, reservoirs and containers of metal | 25300 - Manufacture of steam generators, except central heating hot water boilers | 25400 - Manufacture of weapons and ammunition | 25710 - Manufacture of cutlery | 25920 - Manufacture of light metal packaging | 26800 - Manufacture of magnetic and optical media | 2710 - Manufacture of basic iron & steel & of Ferro-alloys | 28410 - Manufacture of metal forming machinery | 29202 - Manufacture of trailers and semi-trailers | 2922 - Manufacture of lift & handling equipment | 3110 - Manufacture electric motors, generators etc. | 32110 - Striking of coins | 3622 - Manufacture of jewellery & related | 4523 - Construction roads, airfields etc. | 4542 - Joinery installation | 5114 - Agents in industrial equipment, etc. | 5145 - Wholesale of perfume and cosmetics | 5226 - Retail sale of tobacco products | 5227 - Other retail food etc. specialised | 5242 - Retail sale of clothing | 5245 - Retail electric h'hold, etc. goods | 7140 - Rent personal & household goods | 7210 - Hardware consultancy | 7221 - Software publishing | 7470 - Other cleaning activities | 7482 - Packaging activities | 8041 - Driving school activities | 84300 - Compulsory social security activities | 8512 - Medical practice activities | 8513 - Dental practice activities | 9500 - Private households with employees | 9800 - Residents property management | 5248 - Other retail specialist stores | 6022 - Taxi operation | 2212 - Publishing of newspapers | 25500 - Forging, pressing, stamping and roll-forming of metal; powder metallurgy | 27330 - Manufacture of wiring devices | 47741 - Retail sale of hearing aids | 5185 - Wholesale of other office machinery & equipment | 23430 - Manufacture of ceramic insulators and insulating fittings | 28923 - Manufacture of equipment for concrete crushing and screening and roadworks | 5231 - Dispensing chemists | 5247 - Retail books, newspapers etc. | 20520 - Manufacture of glues | 2112 - Manufacture of paper & paperboard | 2211 - Publishing of books | 24540 - Casting of other non-ferrous metals | 5020 - Maintenance & repair of motors | 5132 - Wholesale of meat and meat products | 5211 - Retail in non-specialised stores holding an alcohol licence, with food, beverages or tobacco predominating, not elsewhere classified | 5241 - Retail sale of textiles | 5552 - Catering | 77220 - Renting of video tapes and disks | 01220 - Growing of tropical and subtropical fruits | 10420 - Manufacture of margarine and similar edible fats | 2811 - Manufacture metal structures & parts | 28210 - Manufacture of ovens, furnaces and furnace burners | 28910 - Manufacture of machinery for metallurgy | 28921 - Manufacture of machinery for mining | 7110 - Renting of automobiles | 9272 - Other recreational activities nec | 5263 - Other non-store retail sale | 5186 - Wholesale of other electronic parts & equipment | 5184 - Wholesale of computers, computer peripheral equipment & software | 01640 - Seed processing for propagation | 1598 - Produce mineral water, soft drinks | 5261 - Retail sale via mail order houses | 9301 - Wash & dry clean textile & fur | 01230 - Growing of citrus fruits | 01260 - Growing of oleaginous fruits | 0130 - Crops combined with animals, mixed farms | 1010 - Mining & agglomeration of hard coal | 14310 - Manufacture of knitted and crocheted hosiery | 1717 - Preparation & spin of other textiles | 20600 - Manufacture of man-made fibres | 23520 - Manufacture of lime and plaster | 23910 - Production of abrasive products | 2513 - Manufacture of other rubber products | 2522 - Manufacture of plastic pack goods | 2524 - Manufacture of other plastic products | 2621 - Manufacture of ceramic household etc. goods | 2743 - Lead, zinc and tin production | 28132 - Manufacture of compressors | 28230 - Manufacture of office machinery and equipment (except computers and peripheral equipment) | 28950 - Manufacture of machinery for paper and paperboard production | 29203 - Manufacture of caravans | 2940 - Manufacture of machine tools | 2943 - Manufacture of other machine tools not elsewhere classified | 3630 - Manufacture of musical instruments | 4010 - Production | 4013 - Distribution & trade in electricity | 5111 - Agents agricultural & textile raw materials | 5116 - Agents in textiles, footwear etc. | 5118 - Agents in particular products | 5119 - Agents in sale of variety of goods | 5139 - Non-specialised wholesale food, etc. | 5153 - Wholesale wood, construction etc. | 5221 - Retail of fruit and vegetables | 52211 - Operation of rail freight terminals | 6120 - Inland water transport | 6210 - Scheduled air transport | 6322 - Other supporting water transport | 6511 - Central banking | 7230 - Data processing | 7240 - Data base activities | 7250 - Maintenance office & computing mach | 7430 - Technical testing and analysis | 7481 - Portrait photographic activities, other specialist photography, film processing | 8030 - Higher education | 9213 - Motion picture projection | 9262 - Other sporting activities | 9304 - Physical well-being activities | 23420 - Manufacture of ceramic sanitary fixtures | 0111 - Grow cereals & other crops | 05200 - Mining of lignite | 19100 - Manufacture of coke oven products | 23130 - Manufacture of hollow glass | 5113 - Agents in building materials | 5157 - Wholesale of waste and scrap | 9302 - Hairdressing & other beauty treatment | 10310 - Processing and preserving of potatoes | 1552 - Manufacture of ice cream | 3002 - Manufacture computers & process equipment | 6321 - Other supporting land transport | 6412 - Courier other than national post | 01150 - Growing of tobacco | 2923 - Manufacture non-domestic ventilation | 3140 - Manufacture of accumulators, batteries etc. | 3611 - Manufacture of chairs and seats | 4541 - Plastering | 5521 - Youth hostels and mountain refuges | 7122 - Rent water transport equipment | 7132 - Rent civil engineering machinery | 8022 - Technical & vocational secondary | 7133 - Rent office machinery inc computers | 01140 - Growing of sugar cane | 2956 - Manufacture other special purpose machine | 6023 - Other passenger land transport | 8532 - Social work without accommodation | 9003 - Sanitation remediation and similar activities | 1571 - Manufacture of prepared farm animal feeds | 5117 - Agents in food, drink & tobacco | 5156 - Wholesale other intermediate goods | 77352 - Renting and leasing of freight air transport equipment | 9600 - Undifferentiated goods producing activities of private households for own use | 24460 - Processing of nuclear fuel | 2862 - Manufacture of tools | 3520 - Manufacture of railway locomotives & stock | 5136 - Wholesale sugar, chocolate etc. | 5232 - Retail medical & orthopaedic goods | 3230 - Manufacture TV & radio, sound or video etc. | 5511 - Hotels & motels | 2874 - Manufacture fasteners, screw, chains etc. | 2416 - Manufacture of plastics in primary forms | 3410 - Manufacture of motor vehicles | 4012 - Transmission of electricity | 5225 - Retail alcoholic & other beverages | 5030 - Sale of motor vehicle parts etc. | 2851 - Treatment and coat metals | 5243 - Retail of footwear & leather goods | 1513 - Production meat & poultry products | 1583 - Manufacture of sugar | 1723 - Worsted-type weaving | 1910 - Tanning and dressing of leather | 2812 - Manufacture builders' carpentry of metal | 2912 - Manufacture of pumps & compressors | 3130 - Manufacture of insulated wire & cable | 4030 - Steam and hot water supply | 5138 - Wholesale other food inc fish, etc. | 5164 - Wholesale office machinery & equip | 5274 - Repair not elsewhere classified | 6311 - Cargo handling | 6411 - National post activities | 6522 - Other credit granting | 6713 - Auxiliary financial intermed | 8010 - Primary education | 2214 - Publishing of sound recordings | 9131 - Religious organisations | 0501 - Fishing | 23640 - Manufacture of mortars | 3320 - Manufacture instruments for measuring etc. | 5233 - Retail cosmetic & toilet articles | 7524 - Public security, law & order | 24310 - Cold drawing of bars | 5222 - Retail of meat and meat products | 5272 - Repair electrical household goods | 4020 - Mfr of gas; mains distribution | 3430 - Manufacture motor vehicle & engine parts | 2661 - Manufacture concrete goods for construction | 3710 - Recycling of metal waste and scrap | 3720 - Recycling non-metal waste & scrap | 6021 - Other scheduled passenger land transport | 6110 - Sea and coastal water transport | 7310 - R & d on nat sciences & engineering | 2223 - Bookbinding and finishing | 1110 - Extraction petroleum & natural gas | 2441 - Manufacture of basic pharmaceutical prods | 2681 - Production of abrasive products | 2821 - Manufacture tanks, etc. & metal containers | 3541 - Manufacture of motorcycles | 5135 - Wholesale of tobacco products | 5223 - Retail of fish, crustaceans etc. | 6720 - Auxiliary insurance & pension fund | 2413 - Manufacture other inorganic basic chemicals | 5146 - Wholesale of pharmaceutical goods | 0150 - Hunting and game rearing inc. services | 1320 - Mining non-ferrous metal ores | 1711 - Prepare & spin cotton-type fibres | 1713 - Prepare & spin worsted-type fibres | 1721 - Cotton-type weaving | 1751 - Manufacture of carpets and rugs | 2231 - Reproduction of sound recording | 23650 - Manufacture of fibre cement | 24340 - Cold drawing of wire | 2612 - Shaping & process of flat glass | 2733 - Cold forming or folding | 2873 - Manufacture of wire products | 3340 - Manufacture optical, photographic etc. equipment | 3640 - Manufacture of sports goods | 5112 - Agents in sale of fuels, ores, etc. | 6220 - Non-scheduled air transport | 7514 - Support services for government | 9002 - Collection and treatment of other waste | 9232 - Operation of arts facilities | 9303 - Funeral and related activities |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|